Distribute the love

It’s Wednesday morning and you’re feeling good as you turn up for work, so you decide to treat yourself to a coffee from the 7th floor meeting room. You know, the one with the posh coffee machine. You’re just starting to wonder if you pushed the right button because you can’t hear anything, but then the coffee starts streaming out and you realise that, unlike the one in the cafeteria downstairs, this one doesn’t come with a fake grinding noise that’s meant to convince you that the coffee is freshly ground (even though in 2 years you’ve never seen the level of the beans change in the box on top). This is the good stuff: it’s looking creamy and you haven’t even put the milk in yet. Just as you are admiring the fine china, Burnsy walks in. ‘Oh hello,’ he says, eyebrows climbing, ‘what are you doing in here..?’

Burnsy is the Intrusion Detection Specialist at the firm and you are starting to wonder if this coffee machine is reserved for wealthy clients. To forestall any awkward explanations, you tell him all about the Great Negotiator.

“..and today I’ll be demo-ing it to the Board at 1pm!” you finish off triumphantly.

“Good job!” says Burnsy. “I assume you’ve stress-tested it to confirm its performance when thousands of customers are using it simultaneously to place orders?”

Err… “Pass me the milk, Sir,” you say brightly.

You’re back just in time for Standup, so you’ve got plenty of opportunity to sip your coffee while good ol’ Dmitry bangs on about the bleeding obvious, but you have to admit you are inwardly chafing a little bit. However, bigger challenges lie ahead: no sooner have you sat down and dropped a couple of Timestamp fields into your design (so you can calculate how long it took for the Negotiator to process a customer order) than you are wondering about 3 things:

- You don’t want the Negotiator spending time calculating its own performance statistics when it should be concentrating on customer requests.

- You probably want an almost realtime report on how well the Negotiator is doing in terms of servicing the customer orders, so you can react if things aren’t going well.

- Sounds like you need another service to monitor the Negotiator service.
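The split of duties in the three points above can be pictured with a small sketch. This is not the generated CodeTrigger code, just a minimal Python illustration under stated assumptions: the `OrderRecord` fields and the `Regulator` methods are hypothetical names invented here. The Negotiator only stamps two times on each order; the Regulator does all the arithmetic.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean


@dataclass
class OrderRecord:
    """Hypothetical record: the Negotiator only fills in the two timestamps."""
    order_id: int
    received_at: datetime   # stamped when the customer order arrives
    responded_at: datetime  # stamped when the Negotiator responds


class Regulator:
    """Hypothetical monitor that does the sums so the Negotiator doesn't have to."""

    def __init__(self):
        self._latencies = []  # processing times in seconds

    def observe(self, record):
        # Processing time is simply the gap between the two timestamps.
        elapsed = record.responded_at - record.received_at
        self._latencies.append(elapsed.total_seconds())

    def average_latency(self):
        # Average processing time so far; 0.0 if nothing has been observed yet.
        return mean(self._latencies) if self._latencies else 0.0
```

Feeding the Regulator a record at a time keeps the report close to realtime without adding any work to the order-handling path.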

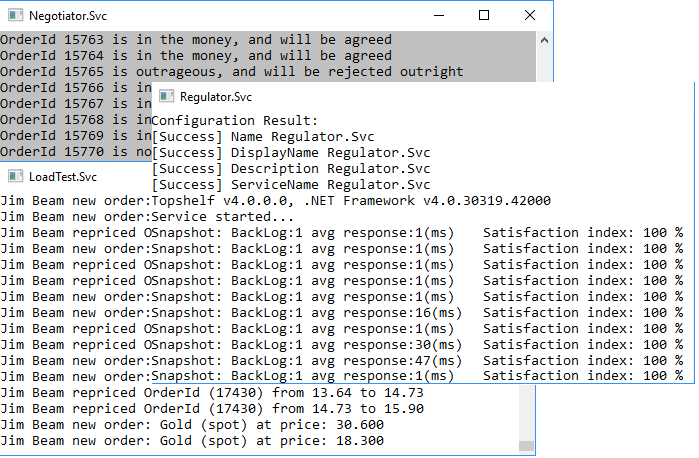

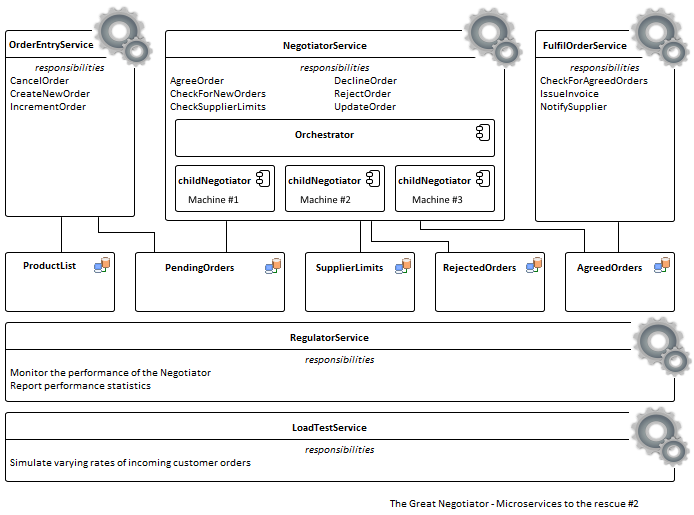

So, what are you going to call this new ‘service’ that ‘Monitors’ the Negotiator service? Why, the Regulator service, obviously. You are also going to need a LoadTest service to simulate all those thousands of customer orders, to see how your solution performs under load. Your platform of 3 microservices has just become 5 microservices. Now this is OK because you just click through CodeTrigger to generate the new services, and then paste in some code to define the load test. You define some metrics, including a SatisfactionIndex, with the customer 100% satisfied if their order is processed (or at least responded to) within half a second (i.e. before they even take their thumb off the button). Running this initially with a simulated scenario of 500 orders a second gives you the following feedback.
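To make the SatisfactionIndex a little more concrete, here is one way it might be computed. Only the half-second target comes from the story. How satisfaction falls away after that is not stated, so the linear decay and the 5-second cutoff below are assumptions made purely for illustration, as are the function names.

```python
TARGET_SECONDS = 0.5  # from the text: 100% satisfied within half a second
CUTOFF_SECONDS = 5.0  # ASSUMPTION: satisfaction reaches 0% here (not from the article)


def satisfaction(latency_seconds):
    """Satisfaction (0 to 100) for one order, assuming linear decay past the target."""
    if latency_seconds <= TARGET_SECONDS:
        return 100.0
    if latency_seconds >= CUTOFF_SECONDS:
        return 0.0
    remaining = CUTOFF_SECONDS - latency_seconds
    return 100.0 * remaining / (CUTOFF_SECONDS - TARGET_SECONDS)


def satisfaction_index(latencies):
    """Average satisfaction across a batch of order latencies (in seconds)."""
    if not latencies:
        return 100.0  # no orders, so nobody is unhappy
    return sum(satisfaction(s) for s in latencies) / len(latencies)
```

With these assumed numbers, an order answered in 2.75 seconds sits halfway between the target and the cutoff and scores 50.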

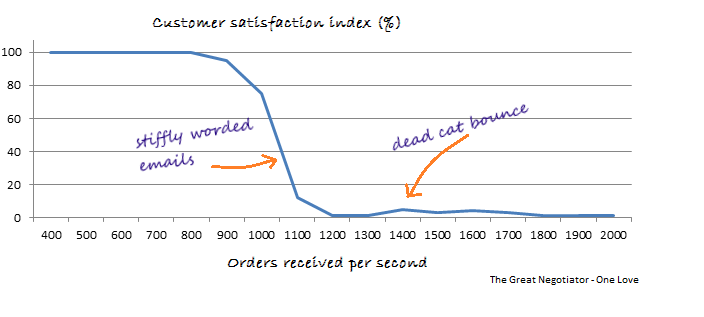

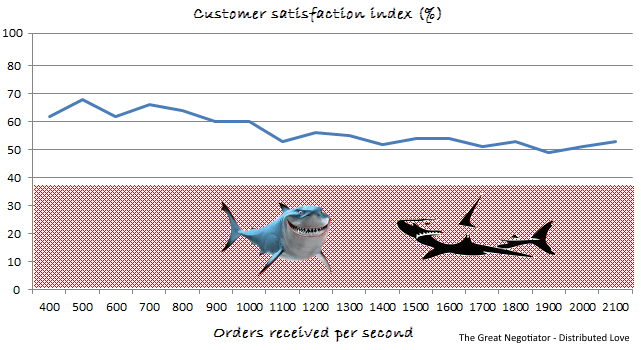

Happy days! “Wait till you see this!”, you think to yourself. Product is flying off the shelf, customers are sending Thank You! notes, and everyone’s a winner. Filled with confidence, you gradually ramp up the scale of simulated customer orders, plot the satisfaction index on a graph, and then you see this.

Disaster. The rate of order processing has dropped off a cliff. Convoys of ships carrying market-bound precious semiconductor chips are stuck in holding circles off the coast of New Guinea, customers are getting irate and there’s a whiff of smoke coming out of the data-centre. You don’t need anyone to tell you that this is not a good look for a performance profile, but sure enough, Dmitry pops up behind you. Looking over your shoulder, he remarks smugly, “So… going well then. I hear you are demoing to the board later. Good luck with that. Oh, and I heard that JohnO, the London Desk boss, is around and he’s very interested in seeing your demo…”

The world seems to grey out for a minute, you’re not sure if your pulse is still ticking, and you can see Dmitry’s lips moving but his voice seems to fade out. Then it fades back in and he’s saying “…but apparently he’s a bit jet-lagged, so the demo has been moved to tomorrow.”

Well, that’s a relief. Rumour has it that JohnO is a bit of a bruiser, keeps a punch-bag in his office, and thinks sensitivity is something to do with teeth. You’re going to need to pull your socks up on this one. Clearly at higher loads the Negotiator is struggling as a one-piece band, and making all the wrong noises. What you need to do is distribute the load. You need to make the Negotiator Great Again. So you revise your proposed solution to look like this.

Now the Negotiator has up-skilled as a conductor, and is orchestrating the load between child negotiators. As the load increases, the Negotiator births a new child negotiator, co-opting new machines if necessary, and hands it the extra work as required. This looks like it could work. To implement this you download the Akka.NET framework from the good folks at Petabridge, and drop it into your solution. Looks like you are finally going to have to write some code, so you roll up your sleeves and tap out some code to distribute the load as and when required among multiple active child negotiators. It’s gone 16:30 by the time you hit the run button on the simulator and you really don’t want to pull another all-nighter, so this had better work. If it doesn’t, come the dawn the fat lady singeth. Thirty minutes later the results are in, and this is what you get.
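A quick aside before the results, for anyone wondering what the conductor idea looks like in code. The sketch below is not the Akka.NET implementation from the project and uses no actor framework at all. It is a plain Python illustration of the routing rule only: send each order to the least busy child, and spawn a new child when every existing one is full. The class names, the backlog limit and the naming scheme are all assumptions, and the children here only queue orders rather than process them.

```python
class ChildNegotiator:
    """Hypothetical worker that just queues the orders it is handed."""

    def __init__(self, name):
        self.name = name
        self.backlog = []

    def accept(self, order):
        self.backlog.append(order)


class Negotiator:
    """Conductor: routes each order to a child, adding children as load grows."""

    def __init__(self, max_backlog_per_child=100):
        # ASSUMPTION: a fixed backlog limit decides when a child counts as full.
        self.max_backlog = max_backlog_per_child
        self.children = [ChildNegotiator("child-1")]

    def dispatch(self, order):
        # Pick the least busy child (the first one wins a tie).
        child = min(self.children, key=lambda c: len(c.backlog))
        if len(child.backlog) >= self.max_backlog:
            # Even the least busy child is full, so bring a new one into the world.
            child = ChildNegotiator(f"child-{len(self.children) + 1}")
            self.children.append(child)
        child.accept(order)
        return child.name
```

In a real deployment the children would drain their backlogs as they work and could live on other machines; the sketch leaves both out to keep the routing decision visible.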

Phew. Happy days are here again! Looks like you can now handle thousands of orders per second and still maintain a reasonable level of responsiveness. Granted, this isn’t a stellar performance from the customer satisfaction index perspective, but then you did set yourself quite an ambitious target. And anyway, your watch can run rings around the old machine under your desk on which you are running this simulation. So imagine what greatness lies ahead when you can get this deployed to multiple beefy machines. Maybe you can even get your hands on a couple of those helium-cooled servers Elon Musk is building in his secret lab. If you do well at the demo tomorrow, you might be able to convince JohnO to shell out for some decent kit.

If you do well. Fingers crossed.

Full Project Files And Code Snippets here